OK, so it's not really about you. It's about me. I had to have something to pique your interest though, didn't I? I wrote this up for my team at work a while back, and I thought I would share it with the universe. So here it is, universe. Hope you dig it.

Creating software is fundamentally a creative act of communication. There are plenty of things to communicate about on any non-trivial software project:

- Customers and end users must communicate with business analysts and designers about their requirements for the system.

- Business analysts and designers must work with test engineers, and must use these requirements to describe the bar the system must clear to be acceptable to customers.

- The development team must understand this specification and communicate with designers, and with one another, so that they can clear that bar quickly enough to satisfy customers and deliver true value to them.

- And tons and tons of other stuff…

Poor communication can contribute real friction to a software project. And, as any major dude will tell you, friction can be a real drag. A primary goal of any software development team should be to reduce friction wherever it occurs in the development process.

In a traditional waterfall approach to managing software projects, stitching together each developer’s work into a single integrated project typically occurs towards the end of a development cycle. This step can be a long and unpredictable process, full of friction, and it can rapidly turn into a nightmare. If you think about it, this is really another communication issue: how do we communicate with one another about the work we have each done as individuals, and put it together so that it all works together seamlessly?

It is important to remember that creating software is a complex process, and there are no silver bullets to ward off a certain amount of complexity. But we should simplify things where we can. Adopting the practice of Continuous Integration (CI) is one step towards simplifying our software development projects. What can CI buy us when we use it in a disciplined way?

- CI can eliminate the need for a long, arduous, risky integration task at the end of a development cycle.

- If we combine CI with good automated unit testing and code coverage metrics, we can make our projects practically self-testing. A good suite of unit tests executing a high percentage of the code base on every integrated build can keep the quality of the code from deteriorating as the development cycle progresses.

- When we work in short bursts and commit new code to the master build server often, defects introduced into the master build become easier to find and eliminate: you’ve only changed a small piece of code since the last time the system functioned properly, so you don’t have far to look to find the defect you just introduced.

- Anyone on the team can get the latest functioning code, build it, and test it locally with minimum effort.

- At any time in the development cycle, everyone on the team knows precisely what works, what doesn’t, and how far they have progressed towards functional code for the iteration.

- CI can facilitate enforcement of coding standards.

- In short, the biggest umbrella benefits CI can buy us are better communication on the development team and reduced risk.

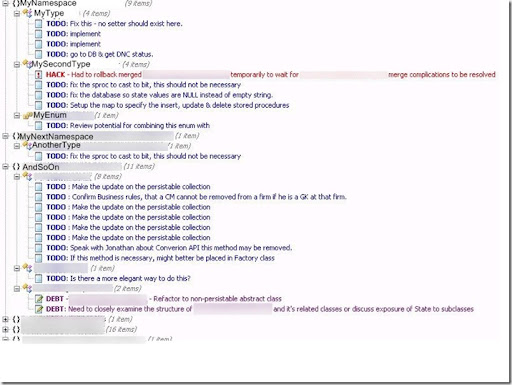

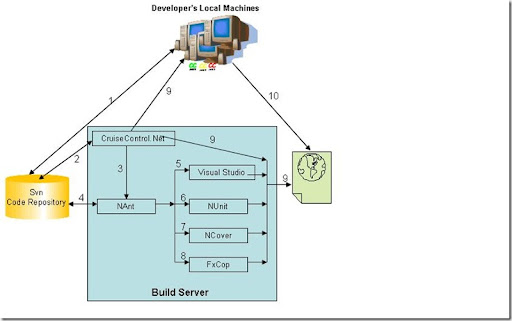

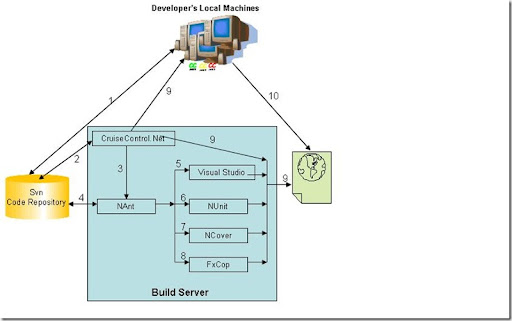

So what exactly is involved when working in a CI environment? What tools are needed? What do developers have to do differently? I know: reading a bunch of wordy blather[1] from yet another starry-eyed XP acolyte about how “this practice will change your life” is extremely tedious. So I drew you a picture. Lemme ‘splain:

CI Tools

This diagram is intended only to be an example of how a CI environment can be configured. There are many different ways to configure a CI server, and many tools you could use. In my diagram, I chose the following tools, some of which we already use at [my company]:

- CruiseControl.Net – the CI server software

- NAnt – an open source .Net build tool; CruiseControl.Net runs its build scripts

- CCTray – A status notification tool for CruiseControl.Net

- Subversion – our source control system

- Visual Studio – duh!

- NUnit – an open source .Net library for creating and running unit tests

- NCover – an open source .Net library for measuring the percentage of code the unit tests actually cover when testing.

- FxCop – a free .Net tool from Microsoft for enforcing coding standards

Most of these tools are open source, which means they are free* (that's a big asterisk there; the licenses for some of these products are changing). You could instead choose commercial applications supported by third party software vendors. But there is a wealth of knowledge documented in the .Net community at large for a CI environment configured like this.

These are the steps:

- Individual developers on the team check new code into Subversion.

- CruiseControl.Net checks the source code repository at regular intervals for newly checked-in code.

- When CruiseControl.Net detects new source code in the repository, it runs targets in the NAnt build script.

- NAnt gets the latest source code from the repository onto the build machine.

- NAnt compiles the project using Visual Studio. If the project won’t compile, the NAnt script exits with a failure message, and CruiseControl.Net delivers a failure message to developers via CCTray.

- NAnt runs all the unit test fixtures using the NUnit framework. If any individual test in any test fixture fails, the NAnt script exits with a failure message, and CruiseControl.Net delivers a failure message to developers via CCTray.

- NAnt uses NCover to measure the percentage of code executed by the NUnit test fixtures. You can set a coverage threshold as a failable build step: if the coverage percentage does not meet the stated coverage standard, say 85%, the NAnt script exits with a failure message, and CruiseControl.Net delivers a failure message to developers via CCTray.

- NAnt uses FxCop to check the code for compliance with coding standards. If it finds any non-compliant code, the NAnt script exits with a failure message, and CruiseControl.Net delivers a failure message to developers via CCTray.

- Once the NAnt script finishes all its tasks, it exits with a success or failure message. CruiseControl.Net delivers the message to developers via CCTray. At the same time, CruiseControl.Net creates web pages for the build it just completed, containing information from each step in the build process.

- Members of the development team can check these web pages to see details on the build results.
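
The fail-fast shape of those steps can be sketched in a few lines of Python. This is only a tool-agnostic illustration, not how NAnt or CruiseControl.Net are implemented: `run_pipeline`, `build_status`, and the stubbed steps are hypothetical names I made up for the example, and each stub simply returns a canned result where a real build would compile, test, measure coverage, and check standards.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# A step returns (passed, detail). These are hypothetical stand-ins for the
# real compile, test, coverage, and standards tasks in a build script.
Step = Callable[[], Tuple[bool, str]]


@dataclass
class StepResult:
    name: str
    passed: bool
    detail: str


def run_pipeline(steps: List[Tuple[str, Step]]) -> List[StepResult]:
    """Run the steps in order and stop at the first failure."""
    results: List[StepResult] = []
    for name, step in steps:
        passed, detail = step()
        results.append(StepResult(name, passed, detail))
        if not passed:
            break  # later steps never run on a broken build
    return results


def build_status(results: List[StepResult]) -> str:
    """Summarize the run as the single message sent to the team."""
    if results and all(r.passed for r in results):
        return "success"
    return "failure"


# Illustrative run: compile and tests pass, coverage misses the standard.
demo_steps: List[Tuple[str, Step]] = [
    ("compile", lambda: (True, "0 errors")),
    ("unit tests", lambda: (True, "all fixtures passed")),
    ("coverage", lambda: (False, "80% is below the 85% standard")),
    ("coding standards", lambda: (True, "no violations")),
]
demo_results = run_pipeline(demo_steps)
for r in demo_results:
    print(f"{r.name}: {'ok' if r.passed else 'FAILED'} ({r.detail})")
print("build:", build_status(demo_results))
```

In this run the coding standards step never executes, because the coverage step has already failed the build. The per-step results are the kind of detail the build web pages would show.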

There are a lot of things that happen automatically in the scenario I described above. Running an environment like that is like having a whole extra person whose job is to put everybody’s code together, regression test every build, check everybody’s code for compliance to coding standards, and report the status of all that back to everyone on the team; but you don’t have to increase your Mountain Dew budget for this extra person.

However, like just about everything else in life, you will only get out of CI what you put into it. You have to approach CI with a certain discipline, and that means developers have to do things a little bit differently. But the cost to developers is small, and the benefit to the quality and progress of the project is great.

Automate the Build

Somebody has to champion the task of creating the CruiseControl.Net configuration and the NAnt scripts. These are not trivial tasks. But once the first set of config files and build scripts has been created, they can be extended for new build tasks and used as templates for other projects. Ideally, the build server should check for newly checked-in code on a very short feedback loop, anywhere from every 60 seconds to every 10 minutes. Structure the automated build process so that it performs the tasks that matter to the development team, and reports on the results of those tasks.
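
To make the polling idea concrete, here is a minimal Python sketch of a build trigger that checks for new revisions on an interval. It is an illustration under assumptions, not CruiseControl.Net's actual trigger: `poll_for_changes`, `get_revision`, and `run_build` are hypothetical names, and the repository is faked with a fixed list of revision numbers.

```python
import time
from typing import Callable, List, Optional


def poll_for_changes(
    get_revision: Callable[[], int],
    run_build: Callable[[int], None],
    interval_seconds: float = 60,
    max_polls: Optional[int] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll the repository and build whenever the revision changes.

    Returns the number of builds started. max_polls=None polls forever.
    """
    last_built: Optional[int] = None
    builds = 0
    polls = 0
    while max_polls is None or polls < max_polls:
        revision = get_revision()
        if revision != last_built:
            run_build(revision)  # new code was checked in since the last build
            last_built = revision
            builds += 1
        polls += 1
        if max_polls is None or polls < max_polls:
            sleep(interval_seconds)
    return builds


# Fake repository: the revision changes on the third and fifth polls.
revisions = iter([100, 100, 101, 101, 102])
built: List[int] = []
count = poll_for_changes(
    get_revision=lambda: next(revisions),
    run_build=built.append,
    max_polls=5,
    sleep=lambda seconds: None,  # skip real waiting in the demo
)
print(built)  # [100, 101, 102]
```

A shorter interval tightens the feedback loop at the cost of more frequent repository checks. Builds still happen only when something has actually changed.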

Write Unit Tests

Test your code. First write tests for your code, then write some more tests, and finally write some tests. And while you’re at it, write some tests. I cannot stress enough how valuable unit testing will be when combined with a CI environment. Test fixtures and test coverage metrics will be the safety net you rely on to tell you the health of the code as you move through the development cycle. Testing and testability should be first class citizens among all the considerations involved in the design process. Every developer on the team should provide test fixtures for their code, and the results of these tests should be viewed as a measure of the health of the code. Decide on a code coverage standard early (85% isn’t bad), and enforce it throughout the development cycle.
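
As a small illustration of a coverage standard used as a pass/fail gate, here is a sketch in Python with a test fixture of its own. The function names are hypothetical, and the line counts are invented for the example. A real coverage tool such as NCover would supply the covered and total figures.

```python
import unittest


def coverage_percent(covered_lines: int, total_lines: int) -> float:
    """Percentage of lines executed by the tests."""
    if total_lines <= 0:
        raise ValueError("total_lines must be positive")
    return 100.0 * covered_lines / total_lines


def meets_coverage_standard(
    covered_lines: int, total_lines: int, threshold: float = 85.0
) -> bool:
    """True when coverage is at or above the agreed standard."""
    return coverage_percent(covered_lines, total_lines) >= threshold


class CoverageGateTests(unittest.TestCase):
    def test_exactly_at_threshold_passes(self):
        self.assertTrue(meets_coverage_standard(1700, 2000))  # 85.0%

    def test_just_below_threshold_fails(self):
        self.assertFalse(meets_coverage_standard(1699, 2000))  # 84.95%

    def test_empty_code_base_is_rejected(self):
        with self.assertRaises(ValueError):
            coverage_percent(0, 0)
```

Saved as a module, the fixture can be run with `python -m unittest`. The point is that the gate itself is ordinary code with ordinary tests, so the standard is enforced the same way on every build.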

Commit Early and Commit Often

A developer’s attitude towards committing code to source control should be much like Al Capone’s attitude towards voting: do it early and often. The quicker you can get code onto the build server, the quicker you know your code will integrate with everyone else’s code.

Do the Check In Dance

Didn’t know this was going to be a dance lesson, did you? Jeremy Miller, the Arthur Murray of programming, has documented what he calls “The Check In Dance”[2]. And it goes a little something like this:

- Let the rest of the team know a change is coming if it's a significant update.

- Get the latest code from source control.

- Do a merge on any conflicts.

- Run the build locally [using the same build script as the build server],[3] and fix any problems found.

- Commit the changes to source control.

- Stop coding until the build passes.

- If the build breaks, drop everything else and fix the build.
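
The dance boils down to a handful of checks before you commit. Here is a rough Python sketch of that decision. `may_commit` and its parameters are hypothetical names for illustration, and the rule about not committing on top of a broken server build (unless you are the one fixing it) is my assumption about how a team would apply the last step, not part of the list above.

```python
from typing import Tuple


def may_commit(
    server_build_passing: bool,
    merged_latest: bool,
    local_build_passed: bool,
    fixing_the_build: bool = False,
) -> Tuple[bool, str]:
    """Decide whether a commit is allowed, and say what to do next."""
    # Assumption: only the person fixing a broken build commits on top of it.
    if not server_build_passing and not fixing_the_build:
        return False, "server build is broken: wait for the fix or help fix it"
    if not merged_latest:
        return False, "get the latest code and merge any conflicts first"
    if not local_build_passed:
        return False, "run the build locally and fix the problems it finds"
    return True, "commit, then stop coding until the server build passes"


allowed, next_step = may_commit(
    server_build_passing=True, merged_latest=True, local_build_passed=False
)
print(allowed, "-", next_step)
```

None of this needs to be automated to be useful. The value is in everyone on the team running through the same checks in the same order.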

Stupid Developer Tricks

Speaking of Jeremy Miller, he has also documented some good general tips for developers to follow in a CI environment. It is worth quoting him at length:

- “Check in as often as you can. Try to reach stopping points as often as you can. This goes back to the basic agile philosophy of making small changes and immediately verifying the small change. When you're doing Test Driven Development you strive to keep ‘Red Bar’ periods as short as possible. The same kind of thinking applies to code check-ins. Make small changes and see the impact on the rest of the code immediately. Merging code will be less painful the more frequently a team integrates their code.

- Avoid stale code. If you have to keep code out for any length of time, make sure you are getting everyone else's changes. Try really hard not to keep code out overnight. If you're using shared developer workstations, put some sort of sign on the workstation that there is outstanding code on the box. I've seen several XP zealots swear that they'll throw away any code left overnight. Personally, I think that's just a silly case of ‘I'm more agile than thou,’ but it's still a bad idea to leave code out overnight if you can help it.

- Don't ever check into or out of a busted build. Checking in might make it harder to fix the build because it will cloud the underlying reason for the build, and you can't really know if your changes are valid.

- Communicate and negotiate check-ins to the rest of the team. Frequently the complexity of a merge can be dependent upon who goes first. Some teams will use some kind of toy as a ‘check in token’ to ensure that there is never more than one set of updates in any CI build. Pay attention to what the rest of the team is doing too.

- If you're working on fixing the build, let the rest of the team know.

- DON'T LEAVE THE BUILD BROKEN OVERNIGHT. That's also an occasional excuse to your wife on why you're home late from work. Use with caution though.

- Not every member of the team needs to be a full-fledged ‘Build Master,’ but every developer needs to know how to execute a build locally and troubleshoot a broken build. If you're suckered into being the technical lead, make sure every team member is up to speed on the build.

- The best practice for effective CI is to perform the integration on a developer workstation before that code escapes into the build server wild. It's okay to break the build once in awhile. One of my former colleagues used to say that the CI build should break occasionally just to know it's actually working. What's not okay is to leave the build in a broken state. That slows down the rest of the team by preventing them from checking in or out. Even worse, somebody might accidentally update their workstation with the broken build and get into an unknown state. If you follow these dance steps, you can minimize build breaks and run more smoothly. Besides, it's embarrassing to have the ‘Shame Card’ on your desk.”

As I said above, there are no silver bullets for much of the complexity we face on software development projects. Continuous Integration is certainly not a silver bullet; but when used effectively, it can indeed improve communication and reduce risk significantly. As Martha says, “Continuous Integration: it’s a Good Thing.”

[1] My wife often accuses me of being tedious and pedantic, particularly because I tend to use words like “tedious” and “pedantic”.

[2] Jeremy Miller, http://codebetter.com/blogs/jeremy.miller/archive/2005/07/25/129797.aspx, 2005

[3] I added the [bracketed text].